About the EEMBC MultiBench™ Multicore Benchmark Suite

Read the Microprocessor Report's review of MultiBench!

MultiBench™ is a suite of benchmarks that allows processor and system designers to analyze, test, and improve multicore processors. It uses three forms of concurrency:

- Data decomposition: multiple threads cooperate on a single goal, demonstrating a processor’s support for fine-grained parallelism.

- Processing multiple data streams: common code runs on multiple threads, each with its own data stream, demonstrating how well a processor scales as the data inputs scale.

- Multiple workload processing: shows the scalability of general-purpose processing, demonstrating concurrency over both code and data.
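To make the first form concrete, here is a minimal sketch of data decomposition with POSIX threads: one array is split into slices, and each thread sums its own slice toward a single result. It is not taken from MultiBench; `parallel_sum`, `slice_t`, and `MAX_THREADS` are hypothetical names chosen for illustration.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

#define MAX_THREADS 64  /* illustrative upper bound, not a MultiBench limit */

/* One slice of the input, owned by exactly one thread. */
typedef struct {
    const int *data;
    size_t begin, end;  /* half-open range [begin, end) */
    long partial;       /* result written by the owning thread */
} slice_t;

static void *sum_slice(void *arg)
{
    slice_t *s = arg;
    long acc = 0;
    for (size_t i = s->begin; i < s->end; i++)
        acc += s->data[i];
    s->partial = acc;
    return NULL;
}

/* Sums data[0..n) using nthreads threads.
 * Returns 0 and stores the sum in *out, or returns the pthread_create
 * error code if a thread could not be started. */
int parallel_sum(const int *data, size_t n, int nthreads, long *out)
{
    assert(nthreads > 0 && nthreads <= MAX_THREADS);
    pthread_t tid[MAX_THREADS];
    slice_t slice[MAX_THREADS];
    int started = 0, rc = 0;

    for (int t = 0; t < nthreads; t++) {
        slice[t].data = data;
        slice[t].begin = n * (size_t)t / (size_t)nthreads;
        slice[t].end = n * (size_t)(t + 1) / (size_t)nthreads;
        slice[t].partial = 0;
        rc = pthread_create(&tid[t], NULL, sum_slice, &slice[t]);
        if (rc != 0)
            break;
        started++;
    }

    /* Join every thread that was started, then combine the partial sums. */
    long total = 0;
    for (int t = 0; t < started; t++) {
        pthread_join(tid[t], NULL);
        total += slice[t].partial;
    }
    if (rc == 0)
        *out = total;
    return rc;
}
```

Because each thread writes only to its own `slice_t`, no locking is needed until the final combine step. Build with `-pthread` and call, for example, `parallel_sum(values, count, 4, &total)`.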

MultiBench combines a wide variety of application-specific workloads with the EEMBC Multi-Instance-Test Harness (MITH), which is compatible with and portable to most multicore processors and operating systems. MITH uses a POSIX-compliant, thread-based API to establish a common programming model that communicates with the benchmark through an abstraction layer, and it provides a flexible interface that allows a wide variety of thread-enabled workloads to be tested.

With literally dozens of workloads, MultiBench may seem a bit daunting. This MultiBench workload datasheet walks you through each kernel with a detailed description of its operation and parameters.

Find the strengths and weaknesses of any multicore processor and system:

- Analyze scalable multicore architectures, memory bottlenecks, thread scheduling support, and efficiency of synchronization

- Measure the impact of parallelization and scalability across both data processing and computationally intensive tasks

- Use MultiBench as an analytical tool for optimizing programs for a specific processor

- Choose from application-focused kernels in more than one hundred workload combinations

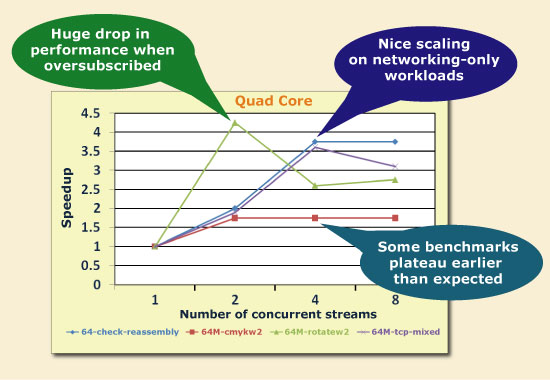

The 100+ MultiBench workloads can be individually parameterized to vary the amount of concurrency being implemented by the benchmark. By applying incrementally challenging and concurrent workloads, system designers can optimize programs for specific processors and systems, as well as assess the impact of memory bottlenecks, cache coherency, thread scheduling support, and efficiency of synchronization between threads. The wide variety of workloads supports judicious monitoring of parameters that highlight the strengths and weaknesses of any multicore processor and system.

Even more information is available from this MultiCore Expo presentation: Multicore Benchmarks Help Match Programming to Processor Architecture.

Common Questions

Q: Can I run MultiBench without SMP Linux (e.g., on bare metal)?

A: MultiBench is intended to run on a Linux-like OS, but it is coded so that it can be ported to bare metal by customizing the abstraction layer to schedule threads and implement a RAM drive for the input datasets.
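As a rough illustration of what "customizing the abstraction layer" can mean for the threading side, the sketch below routes thread creation and joining through a small table of function pointers, with one POSIX backend and one run-to-completion backend standing in for a target without an OS scheduler. None of this is the actual MITH abstraction layer: every `hyp_` name is hypothetical, and the RAM drive for input datasets is not covered.

```c
#include <assert.h>
#include <pthread.h>
#include <stdlib.h>

#define HYP_MAX_JOBS 32  /* illustrative bound */

typedef void *(*hyp_entry_fn)(void *);

/* Hypothetical abstraction layer: the harness calls only these two hooks. */
typedef struct {
    int (*create)(void **handle, hyp_entry_fn fn, void *arg);
    int (*join)(void *handle);
} hyp_thread_ops;

/* Backend 1: POSIX threads. */
static int posix_create(void **handle, hyp_entry_fn fn, void *arg)
{
    pthread_t *t = malloc(sizeof *t);
    if (t == NULL)
        return -1;
    int rc = pthread_create(t, NULL, fn, arg);
    if (rc != 0) {
        free(t);
        return rc;
    }
    *handle = t;
    return 0;
}

static int posix_join(void *handle)
{
    pthread_t *t = handle;
    int rc = pthread_join(*t, NULL);
    free(t);
    return rc;
}

const hyp_thread_ops hyp_posix_ops = { posix_create, posix_join };

/* Backend 2: stand-in for a no-OS target. Each "thread" simply runs to
 * completion inside create(); a real port would use its own scheduler. */
static int inline_create(void **handle, hyp_entry_fn fn, void *arg)
{
    *handle = NULL;
    fn(arg);
    return 0;
}

static int inline_join(void *handle)
{
    (void)handle;
    return 0;
}

const hyp_thread_ops hyp_inline_ops = { inline_create, inline_join };

/* Placeholder job: increments the counter it is given. */
static void *bump(void *arg)
{
    int *counter = arg;
    (*counter)++;
    return NULL;
}

/* Harness-side code: identical regardless of which backend is plugged in.
 * Runs njobs jobs, each on its own counter. Returns 0 on success. */
int hyp_run_jobs(const hyp_thread_ops *ops, int njobs, int *counters)
{
    assert(njobs > 0 && njobs <= HYP_MAX_JOBS);
    void *handles[HYP_MAX_JOBS];
    int started = 0, rc = 0;

    for (int i = 0; i < njobs; i++) {
        rc = ops->create(&handles[i], bump, &counters[i]);
        if (rc != 0)
            break;
        started++;
    }
    for (int i = 0; i < started; i++) {
        int jrc = ops->join(handles[i]);
        if (rc == 0)
            rc = jrc;
    }
    return rc;
}
```

The point of the design is that `hyp_run_jobs` never names pthreads directly, so swapping `hyp_posix_ops` for another backend changes scheduling without touching the workload code.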

Q: Can you explain the allocation of concurrent workloads/workers and the relationship between them and the program?

A: The default scheduling mechanism is POSIX threads (pthreads). The workloads are launched using pthreads, and inside the workloads, the workers are also scheduled with pthreads. The OS determines where each thread is scheduled. To change that behavior, customize the functions in the al_smp_threads file.
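The two-level arrangement described in this answer can be sketched as follows: the program starts one pthread per workload, and each workload thread starts its own worker pthreads, leaving core placement to the OS. This is an illustrative sketch only, not MITH source or the contents of al_smp_threads; `run_workloads`, `workload_t`, and `worker_t` are hypothetical names.

```c
#include <assert.h>
#include <pthread.h>

#define MAX_WORKLOADS 16  /* illustrative bounds */
#define MAX_WORKERS   16

typedef struct {
    int id;
    int done;  /* set to 1 when the worker finishes */
} worker_t;

typedef struct {
    int num_workers;
    int completed;  /* number of workers that finished */
} workload_t;

static void *worker_main(void *arg)
{
    worker_t *w = arg;
    w->done = 1;  /* stand-in for real kernel work */
    return NULL;
}

/* Level 2: a workload thread schedules its own workers with pthreads. */
static void *workload_main(void *arg)
{
    workload_t *wl = arg;
    pthread_t tid[MAX_WORKERS];
    worker_t workers[MAX_WORKERS];
    int started = 0;

    for (int i = 0; i < wl->num_workers; i++) {
        workers[i].id = i;
        workers[i].done = 0;
        if (pthread_create(&tid[i], NULL, worker_main, &workers[i]) != 0)
            break;
        started++;
    }
    wl->completed = 0;
    for (int i = 0; i < started; i++) {
        pthread_join(tid[i], NULL);
        wl->completed += workers[i].done;
    }
    return NULL;
}

/* Level 1: the program launches each workload as a pthread.
 * Returns the total number of workers that completed. */
int run_workloads(int num_workloads, int workers_each)
{
    assert(num_workloads > 0 && num_workloads <= MAX_WORKLOADS);
    assert(workers_each > 0 && workers_each <= MAX_WORKERS);
    pthread_t tid[MAX_WORKLOADS];
    workload_t wl[MAX_WORKLOADS];
    int started = 0, total = 0;

    for (int i = 0; i < num_workloads; i++) {
        wl[i].num_workers = workers_each;
        wl[i].completed = 0;
        if (pthread_create(&tid[i], NULL, workload_main, &wl[i]) != 0)
            break;
        started++;
    }
    for (int i = 0; i < started; i++) {
        pthread_join(tid[i], NULL);
        total += wl[i].completed;
    }
    return total;
}
```

With three workloads of four workers each, `run_workloads(3, 4)` creates 15 threads in total (3 workload threads plus 12 workers), and nothing in the code says which core any of them runs on.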

Request more information from EEMBC.